Nonsmooth, Nonconvex Optimization

Abstract

There are many algorithms for minimization when the objective function is differentiable, convex, or has some other known structure, but few options when none of the above holds, particularly when the objective function is nonsmooth at minimizers, as is often the case in applications. The speaker will discuss two algorithms for minimization of nonsmooth, nonconvex functions.

Gradient Sampling is a simple method that, although computationally intensive, has a nice convergence theory. The method is robust and the convergence theory has recently been extended to constrained problems.
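
As a rough illustration of the idea (not the speaker's implementation), Gradient Sampling can be sketched in one dimension. The function below samples derivatives at random points near the current iterate, takes the smallest-magnitude element of their convex hull, and either steps along its negative or shrinks the sampling radius. In one dimension that hull is an interval, so no quadratic program is needed; in higher dimensions the minimum-norm element is found by solving a small quadratic program. The test objective `f`, its derivative `df`, the function name `gradient_sampling_1d`, and all parameter values are assumptions chosen for this sketch.

```python
import math
import random


def f(x):
    # Hypothetical test objective: nonconvex, with a kink at its minimizer x = 1.
    return math.log1p(abs(x - 1.0))


def df(x):
    # Derivative away from the kink; at x = 1 return 0, one valid subgradient.
    if x == 1.0:
        return 0.0
    return math.copysign(1.0, x - 1.0) / (1.0 + abs(x - 1.0))


def gradient_sampling_1d(f, df, x0, eps=0.1, eps_min=1e-6, m=10,
                         beta=1e-4, tol=1e-8, max_iter=1000, seed=0):
    rng = random.Random(seed)
    x = x0
    for _ in range(max_iter):
        if eps < eps_min:
            break
        # Derivatives at x and at m random points within distance eps of x.
        derivs = [df(x)] + [df(x + rng.uniform(-eps, eps)) for _ in range(m)]
        lo, hi = min(derivs), max(derivs)
        # Minimum-norm element of the convex hull [lo, hi] of the samples.
        if lo <= 0.0 <= hi:
            g = 0.0
        elif lo > 0.0:
            g = lo
        else:
            g = hi
        if abs(g) <= tol:
            eps *= 0.1  # approximately stationary at this radius: sample closer
            continue
        # Backtracking (Armijo) line search along the direction -g.
        fx, t = f(x), 1.0
        while t > 1e-16 and f(x - t * g) >= fx - beta * t * g * g:
            t *= 0.5
        if t > 1e-16:
            x -= t * g
        else:
            eps *= 0.1  # no decrease found: shrink the radius and resample
    return x


x_star = gradient_sampling_1d(f, df, x0=4.0)
print(x_star, f(x_star))
```

The repeated sampling at every iterate is what makes the method computationally intensive, and it is also what lets the method detect a kink that the derivative at a single point would miss.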

The Broyden-Fletcher-Goldfarb-Shanno (BFGS) algorithm is a well-known method that was developed for smooth problems but is remarkably effective for nonsmooth problems too. Although the theoretical results in the nonsmooth case are quite limited, the speaker and his research group have made some remarkable empirical observations and have had broad success in applications. Limited Memory BFGS is a popular extension for large problems, and it is also applicable to the nonsmooth case, although the group's experience with it is more mixed.

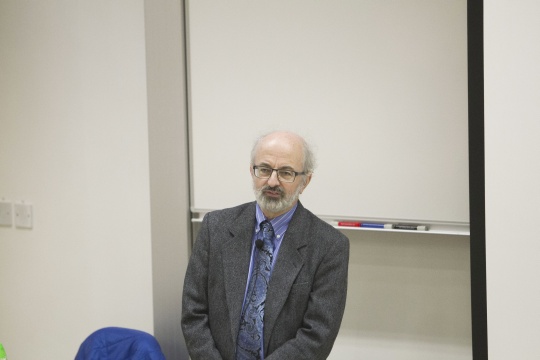

About the speaker

Prof. Michael Overton received his PhD in Computer Science from Stanford University in 1979. He is the Chair and Professor of Computer Science and Professor of Mathematics at the Courant Institute of Mathematical Sciences at New York University.

Prof. Overton’s research interests lie at the interface of optimization and linear algebra, especially nonsmooth optimization problems involving eigenvalues, pseudospectra, stability and robust control. He is the author of Numerical Computing with IEEE Floating Point Arithmetic. He also serves on the editorial boards of several journals, including the SIAM Journal on Matrix Analysis and Applications, IMA Journal of Numerical Analysis, Numerische Mathematik, and Foundations of Computational Mathematics. He is a Fellow of the Society for Industrial and Applied Mathematics and of the Institute of Mathematics and its Applications.